In part 3 of our blog series on Dynamics 365 Finance and Operations data management and integration, we continue to explore the integration types that data entities support. Dynamics 365 for Finance and Operations provides standard out-of-the-box data entities across its modules, which can be used as is or extended. You can also build custom entities to address your specific modification needs. Custom data entities must be created within your own development model and extensions.

Asynchronous integrations are used in business scenarios where very large amounts of data need to be imported or exported via files, or where recurring periodic jobs must run without compromising system performance. Data entities support asynchronous integration through the Data Management Framework (DMF). It enables high-performing asynchronous data insertion and extraction, and is used for:

- Interactive file-based import/export (Using DMF)

- Recurring integrations (file, queue, and so on) (Using DMF / OData)

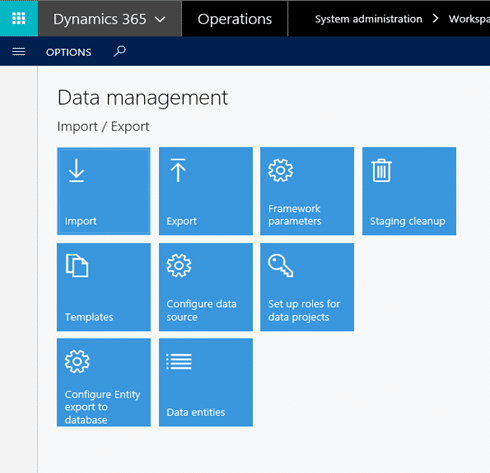

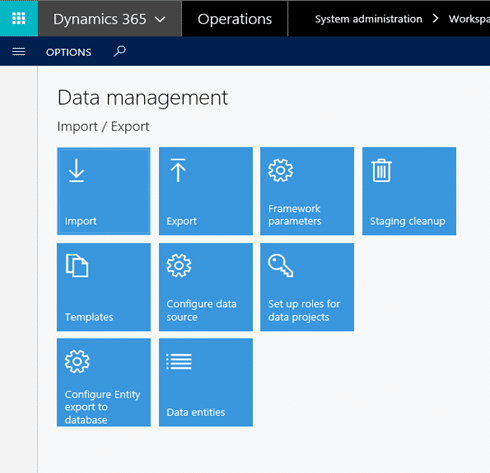

To access the Dynamics 365 Data Management Framework:

- Click on your Dynamics 365 URL → System administration → Data Management

The Data Management Framework components for performing the Asynchronous Integration are specified below:

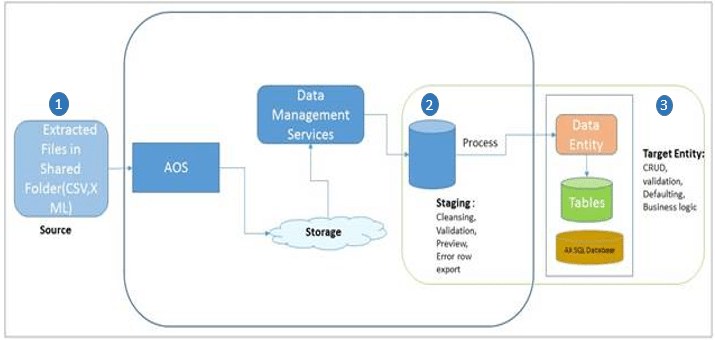

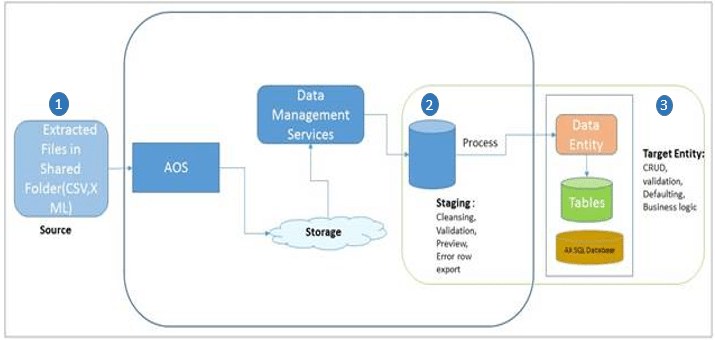

The Data Import / Export Framework (DIXF) is responsible for uploading files from shared folders, transforming the data to populate the staging tables, and validating and mapping the data to the destination tables. This type of integration is particularly useful for bulk data uploads and is the one to consider for a one-time full load.

For One-time Full Load - Using the Data Import / Export Framework:

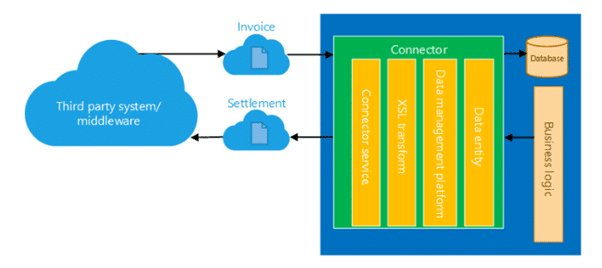

The above diagram depicts the overall architecture of the framework. The Data Import / Export Framework creates a staging table for each entity in the Microsoft Dynamics Finance and Operations database where the target table resides. Data that is being migrated is first moved to the staging table, where business users and decision makers can verify it and perform any cleanup or conversion that is required. After validation and approval, the data can be moved to the target table or exported.

The data flow goes through three phases:

- Source – These are inbound data files or messages in the queue. Data formats include CSV, XML, and tab-delimited.

- Staging – Staging tables are generated to provide intermediary storage. This enables the framework to do high-volume file parsing, transformation, and some validations.

- Target – This is the actual data entity through which data is imported into the target table.
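The three phases above can be illustrated with a minimal Python sketch. It is not how the framework is implemented; it only mimics the source, staging, and target pattern in memory. The `StagingRow` class, both function names, and the sample columns are hypothetical and chosen for illustration.

```python
import csv
import io
from dataclasses import dataclass, field


@dataclass
class StagingRow:
    """One parsed source record plus any validation errors found for it."""
    data: dict
    errors: list = field(default_factory=list)


def load_to_staging(source_text, required_fields):
    """Source -> Staging: parse CSV text and record validation errors per row."""
    staged = []
    for record in csv.DictReader(io.StringIO(source_text)):
        row = StagingRow(data=dict(record))
        for name in required_fields:
            # Treat a missing or blank value as a validation error.
            if not (record.get(name) or "").strip():
                row.errors.append(f"missing {name}")
        staged.append(row)
    return staged


def copy_to_target(staged, target):
    """Staging -> Target: move only valid rows; return the rejected ones."""
    rejected = []
    for row in staged:
        if row.errors:
            rejected.append(row)
        else:
            target.append(row.data)
    return rejected


# Hypothetical sample file with one valid and one invalid record.
SAMPLE = "CustomerAccount,Name\nC001,Contoso\n,Missing account\n"

staged_rows = load_to_staging(SAMPLE, ["CustomerAccount", "Name"])
target_table = []
rejected_rows = copy_to_target(staged_rows, target_table)
print(len(target_table), "imported;", len(rejected_rows), "rejected")
```

Keeping rejected rows in staging rather than discarding them mirrors the review step described earlier, where users can clean up the data before it reaches the target table.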

ODataAuth

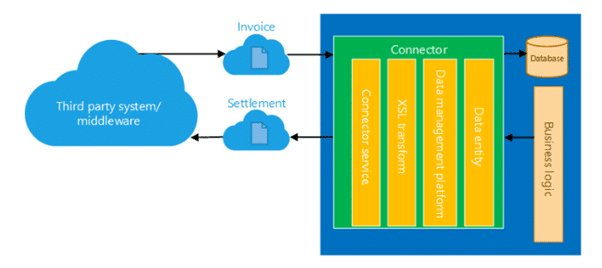

OData integration uses a secure REST application programming interface (API) and an authorization mechanism to receive data from, and send data back to, the integration system. The data is consumed by the data entity and DIXF. It supports both single records and batches of records. This type of integration is useful for incremental or recurring uploads because it enables the transfer of document files between Dynamics 365 and any third-party application or service, and it can be reused or automated at a specified interval.
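As a rough illustration of the authorization step and the REST call, the Python sketch below only builds the requests and sends nothing. It assumes the common setup in which the application is registered in Azure Active Directory and uses the OAuth 2.0 client-credentials flow, and that entities are exposed under the `/data` path of the environment URL. The function names, the `contoso.example.com` host, and all credential values are placeholders; `CustomersV3` is used only as an example entity.

```python
from urllib.parse import quote, urlencode


def build_token_request(tenant_id, client_id, client_secret, environment_url):
    """Return (url, form_body) for a client-credentials token request.

    Assumption: the Azure AD v2.0 token endpoint is used, with the
    environment URL plus '/.default' as the requested scope.
    """
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": f"{environment_url.rstrip('/')}/.default",
    })
    return url, body


def build_odata_request(environment_url, entity_set, access_token, top=None):
    """Return (url, headers) for an OData GET on one entity collection."""
    url = f"{environment_url.rstrip('/')}/data/{quote(entity_set)}"
    if top is not None:
        # Keep '$' literal so the query option reads as $top=N.
        url += "?" + urlencode({"$top": top}, safe="$")
    headers = {
        "Authorization": f"Bearer {access_token}",
        "Accept": "application/json",
    }
    return url, headers


# Placeholder values, for illustration only.
ENV_URL = "https://contoso.example.com"
token_url, token_body = build_token_request(
    "my-tenant-id", "my-app-id", "my-secret", ENV_URL)
odata_url, odata_headers = build_odata_request(
    ENV_URL, "CustomersV3", "TOKEN-FROM-PREVIOUS-STEP", top=5)
print(token_url)
print(odata_url)
```

In practice the token request would be sent as a form-encoded POST, and the `access_token` from its JSON response would be passed in the `Authorization` header of each OData call.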

For Incremental / Recurring Integration - Using OData Framework:

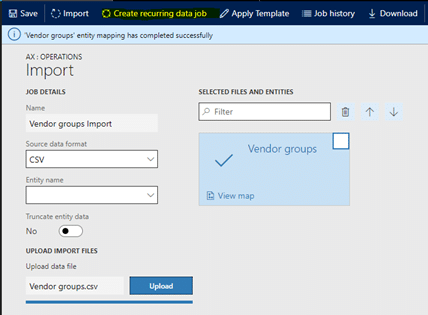

Follow these steps to configure the asynchronous one-time and recurring integration jobs in Dynamics 365:

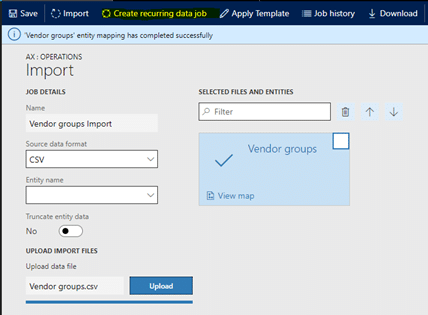

1. Create a data project:

- On the main dashboard, click the Data management tile to open the data management workspace.

- Click the Import or Export tile to create a new data project.

- Enter a valid job name, data source, and entity name.

- Upload a data file for one or more entities. Make sure that each entity is added, and that no errors occur.

- Click Save.

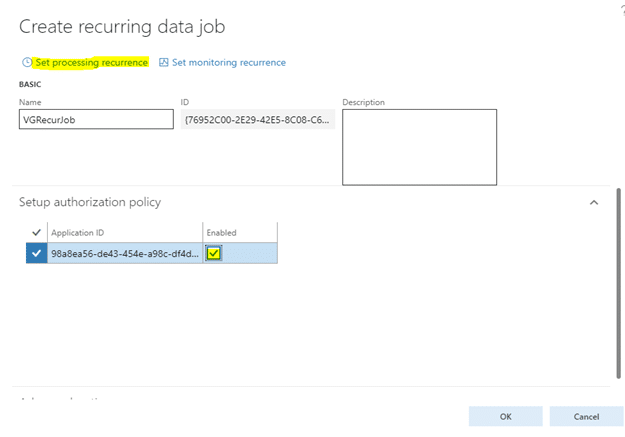

2. Create a recurring data job:

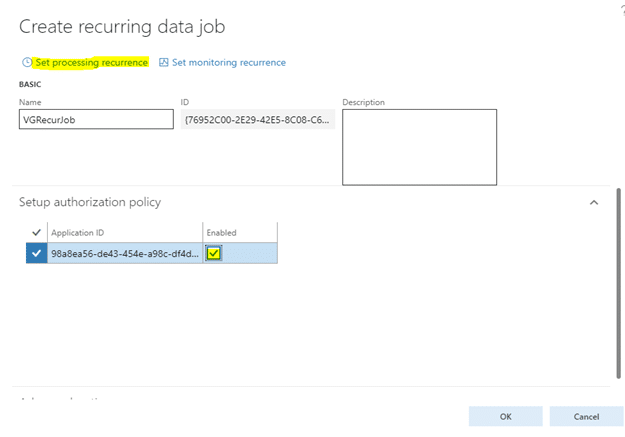

- On the Data project page, click Create recurring data job.

- Enter a valid name and a description for the recurring data job.

- On the Set up authorization policy tab, enter the application ID that was generated for your application, and mark it as enabled.

- Expand Advanced options, and specify either File or Data package.

- Specify File to indicate that your external integration will push individual files for processing via this recurring data job.

- Specify Data package to indicate that you can push only data package files for processing. A data package is a new format for submitting multiple data files as a single unit that can be used in integration jobs.

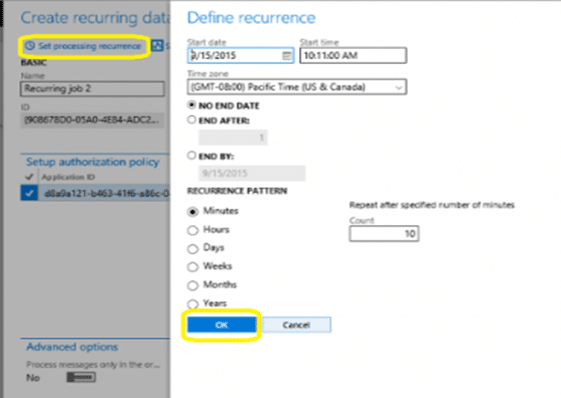

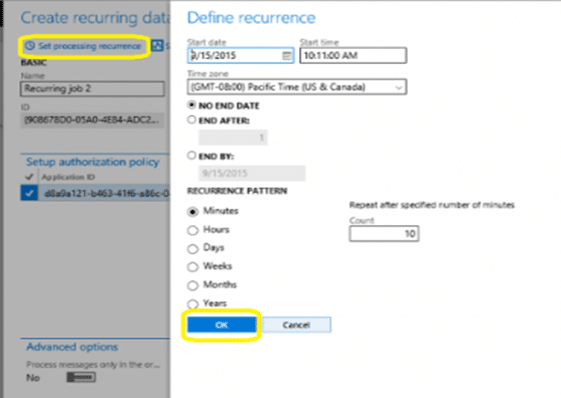

- Click Set processing recurrence, and set a valid recurrence for your data job.

- Click Set monitoring recurrence, and provide a monitoring recurrence.

- Click OK, and then click Yes in the confirmation dialog box.
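Once the recurring data job exists, an external system can push files to it. The Python sketch below builds (but does not send) such a request for the File option. It assumes the enqueue endpoint pattern `/api/connector/enqueue/<activity ID>?entity=<entity name>` from Microsoft's recurring integrations documentation, where the activity ID is the ID of the recurring data job; verify the path against your own environment. The function name, host, activity ID, token, and payload are placeholders.

```python
import urllib.request
from urllib.parse import quote, urlencode


def build_enqueue_request(environment_url, activity_id, entity_name,
                          payload, access_token):
    """Build a POST request that submits one file to a recurring data job.

    Assumption: the job was created with the File option, so the entity
    name is passed as a query parameter and the body is the raw file.
    """
    # quote_via=quote encodes spaces as %20 rather than '+'.
    query = urlencode({"entity": entity_name}, quote_via=quote)
    url = (f"{environment_url.rstrip('/')}/api/connector/enqueue/"
           f"{activity_id}?{query}")
    return urllib.request.Request(
        url,
        data=payload,
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "text/csv",
        },
    )


# Placeholder values, for illustration only.
request = build_enqueue_request(
    "https://contoso.example.com",
    "00000000-0000-0000-0000-000000000000",
    "Customer groups",
    b"CustomerGroupId,Description\n10,Wholesale\n",
    "TOKEN-FROM-AUTH-STEP",
)
print(request.get_method(), request.full_url)
```

Sending the request, for example with `urllib.request.urlopen(request)`, is left out here because it needs a live environment and a valid token for the application ID that was enabled on the job.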

There you have it! Stay tuned as we continue to cover other data entity management and integration scenarios around Business Intelligence, Common Data Service, and Application Lifecycle Management.

Subscribe to our blog for more guides to Dynamics 365 for Finance and Operations!

Happy Dynamics 365'ing!
